The lie about TCO in ecommerce

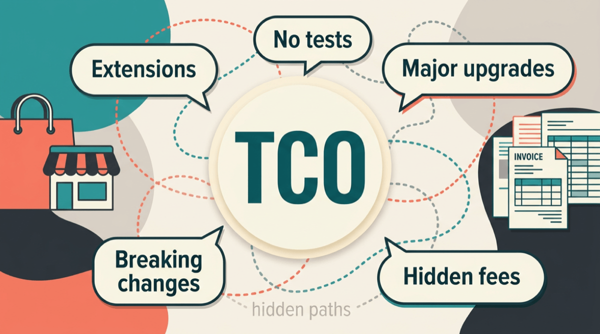

The lie is simple: we talk and buy as if the launch quote were the full price. It is not. The real money is everything after go-live: small fixes, upgrades, “before Black Friday,” extensions fighting each other, and the hours you pay because nobody dares deploy without a ritual.

This is for merchants, agency leads, developers, and anyone who has to explain why year two costs more than the spreadsheet implied. Slides still love the letters TCO. Real life loves the first number and the go-live date. If we keep pretending the rest does not count, we stay shocked when it hurts.

For me TCO is one question. What does it cost to run the shop and change the shop over years? Hosting and licenses matter. The bigger line item is almost always people and risk. If your team is scared to deploy, you are not “saving money.” You are paying in stress and big bang releases.

I spend a lot of time in Shopware land. Same story in Magento, Shopify with apps, or any stack where extensions pile up and nobody owns the map.

People buy the launch, not the total cost

Customer needs a number that fits the budget. Agency needs to win the work. Nobody puts a line in the offer that says “money for tests” or “money for upgrade safety.” Those things are invisible on day one. So they get cut first. Not always with bad intent. Often because nobody knows how to sell “nothing you can click.”

You sold “we go live.” Going live is real work. Keeping the thing safe to change is also real work. When the second part was never bought, expect pain when Shopware (or the platform) moves and something breaks and nobody knows which extension did it.

I am not here to say customers are dumb or agencies are evil. The incentive points at a cheap start. Three years later everyone is mad about the estimate for an update. That estimate is often not greedy. It is the bill for skipping verification and piling on shortcuts. Without real checks, releases turn into manual clicking and praying. Upgrades wait. “Small” changes take days because nobody knows the blast radius. Plugin updates become incidents. That is not bad luck. That is what you get when checking change is expensive.

Tangle, upgrades, and the bill nobody mapped

You do not need a deep tech sermon. You need five different vendors touching checkout, search, discounts, ERP sync, and “one small SEO thing.” Each piece made sense in the meeting. Together they are a tangle. In Shopware it is plugins and theme overrides. In Magento it is modules and preferences. On Shopify it is apps and theme snippets. Different packaging, same headache. Something updates. Something else breaks. Nobody has a map.

The license or monthly fee is not the whole story. The story is who integrates this mess on every update, who argues with third party support, who finds out two extensions both thought they owned the same hook. That time is TCO.
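The "two extensions, one hook" problem is cheap to look for once the map exists. Here is a minimal sketch in Python, assuming you keep a hand-maintained list of which extension touches which hook or event. Every extension name and hook name below is made up for illustration; no platform exports this list for you in this shape.

```python
from collections import defaultdict

# Hypothetical inventory: extension name -> hooks or events it modifies.
# All names are invented for this example.
EXTENSION_HOOKS = {
    "vendor-a-discounts": ["checkout.cart.calculate", "product.price.display"],
    "vendor-b-loyalty": ["checkout.cart.calculate"],
    "vendor-c-search": ["search.query.build"],
    "vendor-d-seo": ["product.page.render"],
}


def find_hook_collisions(extension_hooks):
    """Return {hook: sorted extension names} for hooks touched by more than one extension."""
    by_hook = defaultdict(set)
    for extension, hooks in extension_hooks.items():
        for hook in hooks:
            by_hook[hook].add(extension)
    return {hook: sorted(names) for hook, names in by_hook.items() if len(names) > 1}


collisions = find_hook_collisions(EXTENSION_HOOKS)
for hook, names in sorted(collisions.items()):
    print(f"{hook}: {', '.join(names)}")
```

A collision is not automatically a bug. It is a place where someone should know the load order and have a test before the next update lands.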

Headless and APIs can help draw a line: our surface, their platform. It does not delete work. If you hide ten conflicts behind a new repo and still have no tests, you moved the mess. The win is boundaries you can test, not a buzzword.

A shape I have seen more than once (details anonymized): mid-sized shop, fourteen third-party extensions, no test suite you would bet revenue on, minor platform updates skipped until security or a payment change forced the issue. The jump that could have been a steady rhythm turned into weeks of discovery, finger-pointing between vendors, and re-testing checkout. I am not publishing someone else’s invoice. I am saying the pattern is real. Compare that to a team with the same rough complexity but green CI and a habit of small releases. Their upgrades are still work. They are not a blindfolded marathon.

Extension sprawl is one half. The other half is the platform moving: Shopware x to y, Magento major, PHP bumps, API deprecations, theme engine changes. Release notes get long. “Optional” becomes “you really should” and then “we no longer support.”

Breaking changes are not only renames in code. They show up as data migrations, checkout flows, search, admin workflows, integrations that relied on old hooks, third party plugins that lag behind the core for months. The work is discovery (what do we even use), alignment (vendor roadmaps), testing (did we fix one thing and break three), rollback planning. AI can speed up some typing. It does not remove risk or coordination.

So are major upgrades still that hard now that AI can spit out patches and migrations? Easier than five years ago on the keyboard, yes. Cheaper as a total project, not automatically. You still need someone who knows your shop, your extensions, your data. You still need proof nothing critical died. If the only plan is “paste errors into a model until it stops complaining,” you are gambling with revenue.

Where AI helps on upgrades: boilerplate, first drafts of diffs, changelog summaries, test case ideas, checklists. Where it does not replace humans: judgment calls, rules nobody wrote down, incidents at 9pm, arguing with a plugin vendor whose roadmap does not match yours. TCO for an upgrade is those hours plus opportunity cost if you freeze features while the team fights the jump.

If we sell ecommerce long term, we need a kit

Here is mine, in plain language.

- Inventory. Know what is installed, what is custom, what is mission critical. One page or doc the client signs off on, updated when something new goes in.

- Supported path. Agree how far behind core you are allowed to drift and what happens when security needs a bump. No fairy tales about “we never upgrade.”

- Environments. Staging that is not a joke. Data that is close enough to prod that checkout and integrations mean something. Anonymized if needed.

- Automated checks. Unit on your rules, integration on boundaries, end to end on money paths. CI green before merge. Same pipeline before “upgrade weekend.”

- Release habit. Small, frequent deploys beat hero saves once a year. Upgrades hurt less when the team is not rusty.

- Extension policy. Prefer fewer, owned seams. Say no to the tenth plugin that overlaps. When the client insists, write down that the next major upgrade may cost more. That protects everyone.

- Runbook. How to roll back, who to call, what to monitor the first 48 hours after go-live on the new version.

- Budget line. Sell upgrade readiness the same way you sell design: a small recurring bucket or a yearly “version bump” chunk. If it is always zero until the crisis, TCO explodes and the relationship sours.
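The inventory and supported-path items in the kit above can live in a spreadsheet, but they also fit in a few lines of code that run in CI. This is a rough sketch, not a tool you can install: the `Extension` fields, the extension names, the version numbers, and the drift limit of two minor versions are all assumptions picked for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Extension:
    name: str
    owner: Optional[str]      # who answers for updates; None means nobody
    mission_critical: bool
    custom: bool              # True for in-house code, False for third party


def inventory_findings(extensions: List[Extension]) -> List[str]:
    """Flag extensions without an owner; critical ones are blockers."""
    findings = []
    for ext in extensions:
        if ext.owner is None:
            level = "BLOCKER" if ext.mission_critical else "WARN"
            findings.append(f"{level}: {ext.name} has no owner")
    return findings


def drift_finding(installed: Tuple[int, int], latest: Tuple[int, int],
                  max_minor_drift: int) -> Optional[str]:
    """Compare (major, minor) versions against the agreed drift limit."""
    if installed[0] < latest[0]:
        return f"major upgrade pending: {installed[0]}.x -> {latest[0]}.x"
    behind = latest[1] - installed[1]
    if behind > max_minor_drift:
        return f"{behind} minor versions behind, agreed limit is {max_minor_drift}"
    return None


# Made-up shop: three extensions and a core that has drifted three minors.
shop = [
    Extension("erp-sync", owner="integration team", mission_critical=True, custom=True),
    Extension("payment-provider", owner=None, mission_critical=True, custom=False),
    Extension("seo-snippets", owner=None, mission_critical=False, custom=False),
]
findings = inventory_findings(shop)
drift = drift_finding(installed=(1, 4), latest=(1, 7), max_minor_drift=2)

for line in findings:
    print(line)
if drift:
    print(f"WARN: {drift}")
```

The value is less in the script than in the agreement behind it: someone signed off on the list, and someone agreed what "too far behind" means before the security bump arrives.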

Extensions make the graph messy. Major versions move the ground under the graph. AI is a power tool, not a warranty. The agencies that will do fine built habits and rails so upgrades are boring most of the time.

SaaS looks cheap in the ad. The spreadsheet says something else.

Packaged SaaS ecommerce sells a simple story: sign up, pick apps, go live. The sticker is rarely the whole TCO. You still stack a platform plan, per-order payment fees, paid add-ons, theme work, integrations, and internal or agency time. That is not a dig at one product. It is the shape of the market.

Everything in the table below is made up. Swap in your real plan, fees, apps, and hours. The point is to put cash out the door on one sheet and look at it next to gross margin, not to pretend this is anyone’s audit.

Toy shop, example year

| Line | Example € / year |
|---|---|
| Platform plan (example tier) | 27,600 |
| Payment fees (example blend on card volume) | 87,000 |
| App subscriptions (example stack) | 11,280 |
| Theme (example, amortized) | 1,000 |
| Internal ops and fixes (example hours × rate) | 43,200 |
| Agency retainer (example) | 60,000 |
| Example subtotal | 230,080 |

Rough revenue in this toy: about €3.6M sales in the year (round numbers). Gross margin means revenue minus cost of goods, before ads and salaries. Real P&Ls vary. Say that out loud.

- At about 45% gross margin, gross profit is about €1.62M. That €230k example stack is about 14% of gross profit. Plan plus payment fees alone, before the other rows, land around 7% of gross profit in this same toy.

- At about 28% gross margin, gross profit is about €1.01M, and the same €230k is about 23% of it. Same cash, thinner margin, different pain.
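The arithmetic behind those two bullets is short enough to keep as a script next to the spreadsheet. A minimal sketch in Python using the same made-up toy figures; the dictionary keys and the function name are mine, and none of this is anyone's real P&L.

```python
# Example figures from the toy table; replace with your own numbers.
COSTS_EUR = {
    "Platform plan": 27_600,
    "Payment fees": 87_000,
    "App subscriptions": 11_280,
    "Theme (amortized)": 1_000,
    "Internal ops and fixes": 43_200,
    "Agency retainer": 60_000,
}
REVENUE_EUR = 3_600_000  # rough yearly sales in the toy example


def share_of_gross_profit(cost_eur, revenue_eur, gross_margin):
    """Cost as a fraction of gross profit (revenue times gross margin)."""
    gross_profit = revenue_eur * gross_margin
    return cost_eur / gross_profit


subtotal = sum(COSTS_EUR.values())
plan_and_fees = COSTS_EUR["Platform plan"] + COSTS_EUR["Payment fees"]

for margin in (0.45, 0.28):
    stack = share_of_gross_profit(subtotal, REVENUE_EUR, margin)
    core = share_of_gross_profit(plan_and_fees, REVENUE_EUR, margin)
    print(f"margin {margin:.0%}: stack {stack:.1%} of gross profit, "
          f"plan plus fees {core:.1%}")
```

With the toy numbers this prints 14.2% and 7.1% at a 45% margin, and 22.8% and 11.4% at 28%. Change one row and rerun before the next budget talk.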

The lesson is not “pick vendor X.” The lesson is TCO is a spreadsheet habit, not a feeling. If you want investor-grade splits, break out interchange vs platform on the statement. For a sanity check, cash out the door on the stack is enough.

AI makes drafts cheaper. It does not move liability.

AI will not remove judgment. It speeds up drafting: code, config, copy, migrations, boilerplate. Good if you have rails. Without rails you ship mistakes faster.

For years time and materials assumed “effort” looked like hours at the keyboard. Coding agents and copilots shorten that slice. If the client still buys hours, someone asks why the ticket took half the time, or wants a lower rate because “the robot helped.” The expensive part was never typing. It was context, review, plugging into a messy stack, and owning production when checkout breaks.

More teams now price outcomes or sprints with a real definition of done. Some add a line for tooling and CI. Some sell a retainer for hygiene and upgrades. Tokens and seats cost money. Put that in margin math. Do not race to the bottom on hourly rate because a model helped type. You are not renting fingers. You are buying a safe path to change in production. Agents change how fast drafts show up. They do not move liability or judgment or TCO off the person who signs the deploy.

Specs stay boring on purpose: what “done” means, what is out of scope, what must not break. When AI drafts implementation, that text is what you review against. At the agency it usually means shared docs, decisions next to the code (markdown, ADRs if you use them), and a definition of done that includes green CI, not only “merged to main.”

Each sprint should make the next one easier, not harder. That is the heart of compound engineering: capture what you learned (patterns, checklists, how the team and tools work) so the same mistake costs less next time. Most real value sits in planning and review, not in raw typing. It lines up with selling a system that gets cheaper to change, not a pile of one-offs nobody documented.

    flowchart LR
      subgraph shared [Shared loop]
        spec[Spec and acceptance]
        ci[CI tests and review]
        ship[Ship to staging or prod]
        learn[Compound learnings]
      end
      client[Client product and budget]
      agency[Agency delivery]
      client --> spec
      spec --> agency
      agency --> ci
      ci --> ship
      ship --> client
      ship --> learn
      learn --> spec

Same loop as the paragraphs above: client goals, written specs, automation, small ships, feed learning back in.

Without tests, agents are a lucky game, not engineering

This is the part worth saying loud once.

Look, engineering means you can change the system and you have a clue what broke. Coding agents do not change that. They only make the draft cheaper.

If nothing automated proves behavior matches the spec, call it what it is. You ship plausible output. You wait for pain. You patch. That is a luck loop, not a discipline. The shop can look modern because AI helped. It stays fragile because nobody can honestly say green in CI means safe in prod.

So the pyramid is not decoration under a flashy agent demo.

- Unit tests are for your rules. Tax edges, pricing edges, the small pure bits where a silent wrong answer costs money.

- Integration tests are for seams. Your module against the database, the bus, the payment sandbox, a stubbed ERP. That is how you catch “works on my machine” and bad data.

- End to end tests are for the journeys you actually sell. Checkout, login, the flows where your code sits next to the platform and a bunch of extensions. When AI touched the UI, or when half the plugins fight over the same hook, this layer pays for itself. Models hallucinate. Looks-right code is still allowed to be wrong.
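To make the bottom of that pyramid concrete, here is a small sketch of unit tests on pricing rules in Python. The two rules (round gross prices half up to whole cents, cap discounts between 0% and 100%) and the 19% rate are assumptions chosen for the example, not something a platform or tax authority dictates. The functions `gross_price` and `apply_discount` are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def gross_price(net: Decimal, vat_rate: Decimal) -> Decimal:
    """Net price plus VAT, rounded half up to whole cents (assumed rule)."""
    return (net * (Decimal("1") + vat_rate)).quantize(CENT, rounding=ROUND_HALF_UP)


def apply_discount(price: Decimal, percent: Decimal) -> Decimal:
    """Percentage discount, clamped to 0..100 so the price never goes negative."""
    capped = min(max(percent, Decimal("0")), Decimal("100"))
    discounted = price * (Decimal("100") - capped) / Decimal("100")
    return discounted.quantize(CENT, rounding=ROUND_HALF_UP)


def test_half_cent_rounds_up():
    # 0.50 * 1.19 = 0.595, which must become 0.60, not 0.59
    assert gross_price(Decimal("0.50"), Decimal("0.19")) == Decimal("0.60")


def test_zero_vat_keeps_net():
    assert gross_price(Decimal("19.99"), Decimal("0")) == Decimal("19.99")


def test_discount_over_100_percent_is_capped():
    assert apply_discount(Decimal("10.00"), Decimal("150")) == Decimal("0.00")


if __name__ == "__main__":
    for test in (test_half_cent_rounds_up, test_zero_vat_keeps_net,
                 test_discount_over_100_percent_is_capped):
        test()
    print("pricing rules hold")
```

`Decimal` is used on purpose: with floats, the half-cent case is exactly the kind of silent wrong answer that costs money. The functions also run under pytest unchanged, so the same file can sit in CI.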

If you skip the pyramid to “move faster” with agents, you are not winning. You are piling up fast surprises. The teams that last treat tests and small releases as part of the product. Same as the homepage. Not as optional polish after the model went quiet.

What to do next (short list)

- Put tests and upgrade cadence in the offer or statement of work. If it is not bought, say so in writing.

- Count extensions like you count employees. Each one needs an owner and an update story.

- Do a yearly TCO sheet: plan, fees, apps, labor, agency, incidents. Even rough numbers change the conversation.

- Keep CI honest. You merge when the checks say you can. No sermon, just habit.

- Prefer small releases over hero saves. Boring operations beat exciting outages.

The lowest bid is not the lowest cost over five years. Cheap launch, expensive life: fees, extensions, upgrades, and fear of deploy. AI speeds typing. It does not replace proof, boundaries, or the spreadsheet next to margin.